Matthew Broderick, star of Ferris Bueller’s Day Off, was behind the wheel of a car that crashed into an oncoming car, killing two people. It happened on August 5, 1987, when Broderick and his girlfriend Jennifer Grey (just weeks before the release of her classic film Dirty Dancing) were vacationing in Northern Ireland. Broderick was driving a rental car when he drove into the wrong lane and collided with a car carrying Margaret Doherty, 63, and her daughter Anna Gallagher, 28. Both women were killed. Broderick was knocked unconscious and badly injured, leaving Grey to initially believe she was the lone survivor of the accident. When he came to, Broderick had amnesia and could not remember anything about the day of the accident, saying, “I don’t remember even getting up in the morning. I don’t remember making my bed. What I first remember is waking up in the hospital.”

Actor Alec Baldwin accidentally killed cinematographer Halyna Hutchins on Oct. 21, 2021, while filming the Western Rust. Although it was only 12 days into filming, the small-budget production was already a chaotic mess. Lane Luper, the A-camera first assistant, had resigned just one day before Hutchins’ death because the production was playing “fast and loose” with safety procedures. Luper wrote in the resignation email: “So far there have been 2 accidental weapons discharges and 1 accidental SFX explosives that have gone off around the crew between takes… To be clear there are NO safety meetings these days. There have been NO explanations as to what to expect for these shots.” Baldwin and 25 crew members would later release a statement denying these allegations.

Actor Robert Blake, known for the films In Cold Blood and Lost Highway as well as the TV series Baretta, was found liable for the wrongful death of his much younger wife, Bonny Lee Bakley. On May 4, 2001, after eating with Bakley at Vitello’s Italian Restaurant in Los Angeles, Blake said he left his wife in the car while he ran back into the restaurant to retrieve a pistol he’d forgotten, and that when he returned to the car, he found her shot in the head. Police determined that the gun Blake had left inside the restaurant wasn’t the murder weapon, and when the actual murder weapon was found in a dumpster, his prints weren’t on it. Still, Blake was arrested after two stuntmen came forward claiming he had tried to hire them to kill his wife (although their history of drug use was used against them at trial). With no forensic evidence tying him to the crime, Blake was acquitted of murder after a nearly five-month trial.

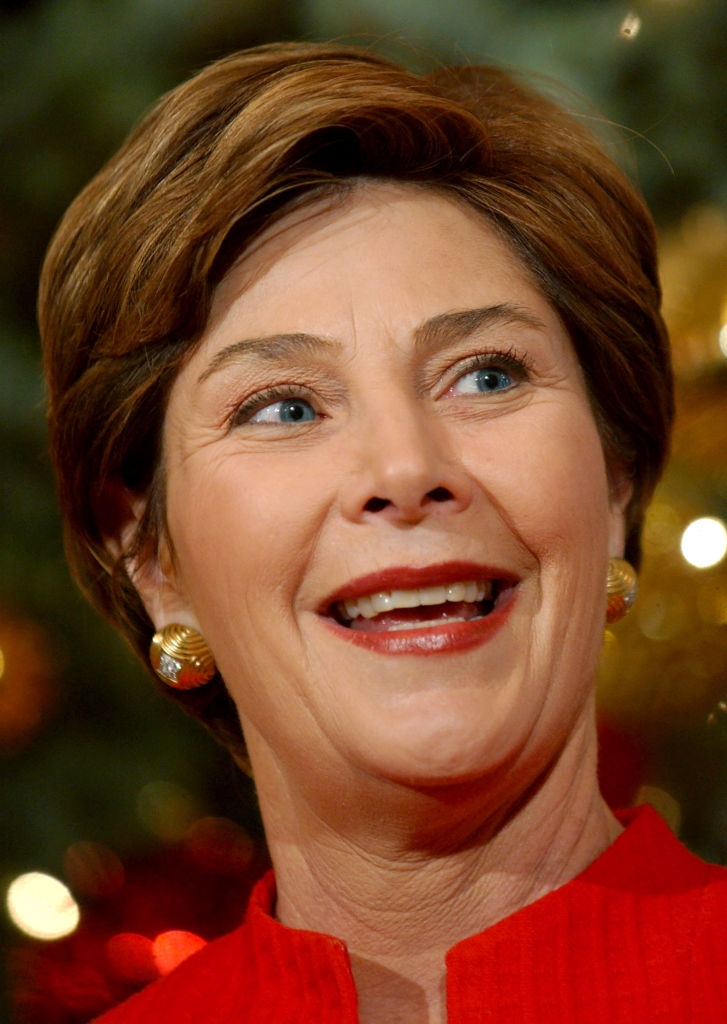

Former First Lady of the United States Laura Bush was the driver in a crash that took a teenager’s life. On the night of November 6, 1963, Laura Welch (her birth name) ran a stop sign while driving her father’s Chevrolet sedan. Her vehicle plowed directly into another car at an intersection, killing Michael Dutton Douglas. Making a sad situation even sadder, Douglas was a close friend of Laura’s with whom she’d spent hours chatting on the phone. According to police reports, Laura was not drinking, was not speeding, and was not charged. The whole thing was ruled a tragic accident. At the time, the crash didn’t make national news — she was just a teenager in a small Texas town. But when Laura became First Lady in 2001, the story resurfaced.

Sex Pistols bassist Sid Vicious was arrested for the murder of his girlfriend. On October 12, 1978, Vicious’s 20-year-old girlfriend, Nancy Spungen, was found dead on the bathroom floor of their room at New York’s infamous Chelsea Hotel. She was clad in her underwear and had a single stab wound to the abdomen. Vicious, who had taken 30 Tuinal pills and drunk a bottle of Jack Daniel’s that night, was found incoherent. When police questioned him, he first said, “I stabbed her, but I didn’t mean to kill her,” and later claimed he didn’t remember anything. The murder weapon was a Jaguar-brand hunting knife, which, ironically, had been a gift from Nancy. Vicious was arrested and charged with second-degree murder, but questions remained about whether he had actually killed her.

Phil Spector, the music producer famous for his “Wall of Sound,” shot a woman to death. On February 3, 2003, the 63-year-old Spector, during a night of heavy drinking, met B-movie actor Lana Clarkson at the House of Blues in West Hollywood, where she was working as a hostess to help make ends meet. Although Clarkson initially mistook the slightly built, big-haired Spector for a woman, he convinced her to go back to his mansion in Alhambra, outside Los Angeles, where, just a few hours later, she died, shot through the mouth with Spector’s gun. Spector’s chauffeur would later testify that his boss came out of the house with a gun in hand and said, “I think I just shot somebody.” Spector initially claimed that Clarkson “kissed the gun,” but investigators determined that the wound couldn’t have been self-inflicted.

Legendary boxing promoter Don King killed two different men. Before turning to fight promotion, King was a hustler from Cleveland running an illegal numbers racket. In 1954, a 23-year-old King shot dead a man named Hillary Brown, who was attempting to rob one of his gambling houses. That killing was ruled a “justifiable homicide,” but in 1966, when King killed another man, the law wasn’t as forgiving. In that incident, King brutally beat a former employee named Sam Garrett, who owed him money, stomping on him repeatedly until police arrived. Officers testified that they found Garrett lying on the pavement, severely injured and bleeding from the head. He later died of his injuries.

William S. Burroughs, author of Junkie and Naked Lunch, shot his wife to death. Before making it in the literary world, Burroughs lived largely off a stipend from his wealthy parents while exploring his interests in writing, drugs, and sex (with both men and women). In 1951, Burroughs was living in Mexico City with his common-law wife, Joan Vollmer, and two young children. One night, while Burroughs was drinking with friends, a gun in his hand went off and killed Vollmer. What exactly happened is unclear. Initially, Burroughs said he and his wife were performing their “William Tell act,” in which he tried to shoot a glass balanced on Vollmer’s head but missed, hitting her in the forehead. That story quickly changed: Burroughs now said he had dropped the gun and it went off when it hit the floor. Also rumored? That Burroughs was in love with a man and shot her on purpose.